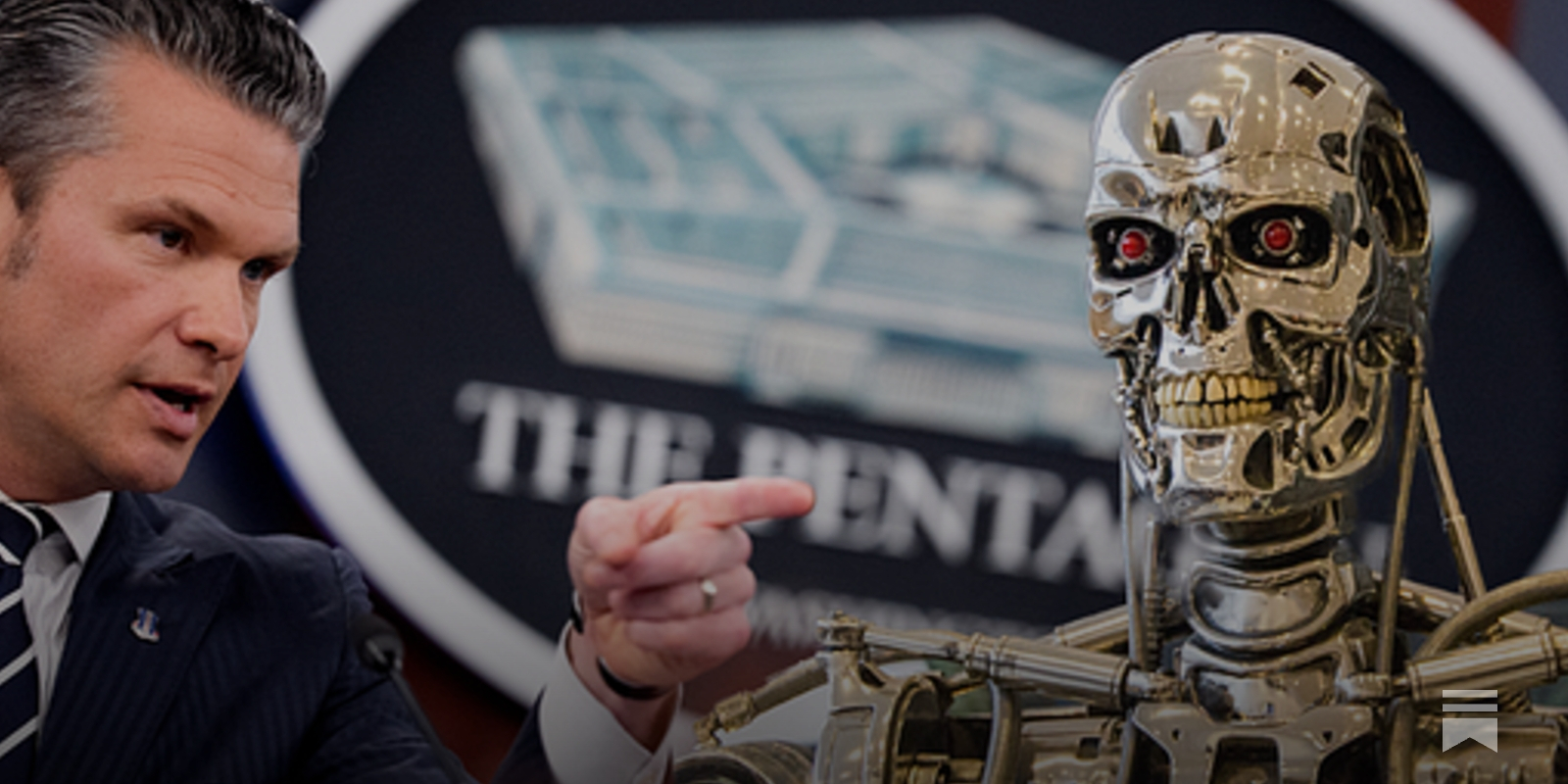

"Defense Sec. #PeteHegseth has grown unhappy with two elements of the DoD’s contract with #Anthropic. One, Anthropic won’t let its #AI be used to conduct mass surveillance of Americans. Two, it won’t let the #DoD use it to operate autonomous weapons systems that can identify, track, and kill targets without direct human involvement. To the Defense Dept., the idea that a contractor would be able to tie the military’s hands like this is outlandish."

https://www.thebulwark.com/i/189250040/hegseths-ai-ultimatum

Discussion

“Anthropic is trying to... put their own guardrails in place in the absence of legislation,” she added. “It should go without saying that #AI #technology should not be making potentially lethal decisions without human involvement. I fear what #America will become if the #DoD is given this unrestricted power.”

"...it’s insane that such questions as “how much killing will we let the killer robots do on their own” are being hashed out as back-room handshakes"

https://www.thebulwark.com/i/189250040/hegseths-ai-ultimatum