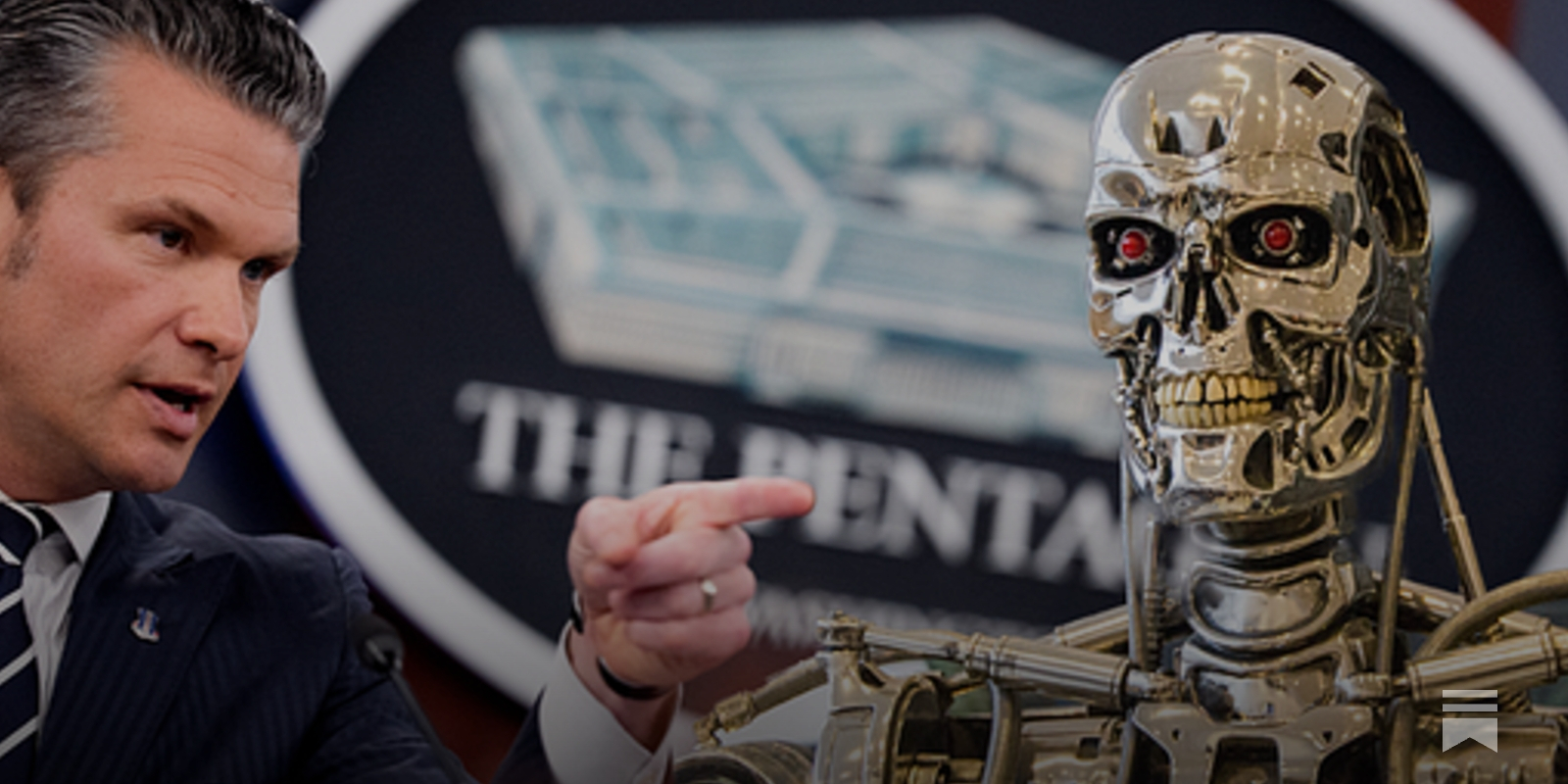

"Defense Sect #PeteHegseth has grown unhappy with two elements of the DoD’s contract with #Anthropic. One, Anthropic won’t let its #AI be used to conduct mass surveillance of Americans. Two, it won’t let the #DoD use it to operate autonomous weapons systems that can identify, track, and kill targets without direct human involvement. To the Defense Dept, the idea that a contractor would be able to tie the military’s hands like this is outlandish"

https://www.thebulwark.com/i/189250040/hegseths-ai-ultimatum